The individual approach

$ \DeclareMathOperator{\argmin}{arg\,min} \newcommand{\psis}{\psi{^\star}} \newcommand{\phis}{\phi{^\star}} \newcommand{\hpsi}{\hat{\psi}} \newcommand{\hphi}{\hat{\phi}} \newcommand{\teps}{\tilde{\varepsilon}} \newcommand{\limite}[2]{\mathop{\longrightarrow}\limits_{\mathrm{#1}}^{\mathrm{#2}}} \newcommand{\DDt}[1]{\partial^2_\theta #1} $

Contents

Models and methods

A model for continuous data:

- \(\begin{align} y_{j} &=& f(t_j ; \psi) + \varepsilon_j \quad ; \quad 1\leq j \leq n \\ &=& f(t_j ; \psi) + g(t_j ; \psi) \tilde{\varepsilon_j} \end{align}\)

- $f$ : structural model

- $\psi=(\psi_1, \psi_2, \ldots, \psi_d)$ : vector of parameters

- $(t_1,t_2,\ldots , t_n)$ : observation times

- $(\varepsilon_j, \varepsilon_2, \ldots, \varepsilon_n)$ : residual errors ($\Epsilon({\varepsilon_j}) =0$)

- $g$ : { residual error model}

- $(\tilde{\varepsilon_1}, \tilde{\varepsilon_2}, \ldots, \tilde{\varepsilon_n})$ : normalized residual errors $(Var(\tilde{\varepsilon_j}) =1)$

- Some residual error models

| - constant error model | $y=f+a\tilde{\varepsilon}$, | $g=a$ |

| - proportional error model | $y=f+bf\tilde{\varepsilon}$, | $g=b\, f$ |

| - combined error model 1 | $y=f+(a+bf)\tilde{\varepsilon}$, | $g=a+b f$ |

| - combined error model 2 | $y=f+\sqrt{a^2+b^2f^2}\tilde{\varepsilon}$, | $g^2=a^2+b^2f^2$ |

Some tasks in the context of modelling, i.e. when a vector of observations $(y_j)$ is available:

- Simulate a vector of observations $(y_j)$ for a given model and a given parameter $\psi$,

- Estimate the vector of parameters $\psi$ for a given model,

- Select the structural model $f$

- Select the residual error model $g$

- Assess/validate the selected model

Maximum likelihood estimation of the parameters: $\hat{\psi}$ maximizes $L(\psi ; y_1,y_2,\ldots,y_j)$

where

- \( L(\psi ; y_1,y_2,\ldots,y_j) {\overset{def}{=}} p_Y( y_1,y_2,\ldots,y_j ; \psi) \)

If we assume that $\bar{\varepsilon_i} \sim_{i.i.d} {\cal N}(0,1)$, then, the $y_i$'s are independent and

- \( y_{j} \sim {\cal N}(f(t_j ; \psi) , g(t_j ; \psi)^2) \)

and the p.d.f of $(y_1, y_2, \ldots y_n)$ can be computed:

- \(\begin{align} p_Y(y_1, y_2, \ldots y_n ; \psi) &=& \prod_{j=1}^n p_{Y_j}(y_j ; \psi) \\ \\ && \frac{e^{-\frac{1}{2} \sum_{j=1}^n \left( \frac{y_j - f(t_j ; \psi)}{g(t_j ; \psi)} \right)^2}}{\prod_{j=1}^n \sqrt{2\pi g(t_j ; \psi)}} \end{align}\)

Maximizing the likelihood is equivalent to minimizing the deviance (-2 $\times$ log-likelihood) which plays here the role of the objective function:

- \(\begin{align} \hat{\psi} = \argmin_{\psi} \left\{ \sum_{j=1}^n \log(g(t_j ; \psi)^2) + \sum_{j=1}^n \left( \frac{y_j - f(t_j ; \psi)}{g(t_j ; \psi) }\right)^2 \right \} \end{align}\)

and the deviance is therefore

- \(\begin{align} -2 LL(\hat{\psi} ; y_1,y_2,\ldots,y_j) = \sum_{j=1}^n \log(g(t_j ; \hat{\psi})^2) + \sum_{j=1}^n \left(\frac{y_j - f(t_j ; \hat{\psi})}{g(t_j ; \hat{\psi})}\right)^2 +n\log(2\pi) \end{align}\)

This minimization problem usually does not have an analytical solution for a non linear model. Some optimization procedure should be used.

Some specific models have specific solutions. For instance, for a constant error model:

- \( y_{j} = f(t_j ; \phi) + a \, \bar{\varepsilon_j}\)

we have

- \(\begin{align} \hat{\phi} &=& \argmin_{\psi} \sum_{j=1}^n \left( y_j - f(t_j ; \phi)\right)^2 \\ \\ \hat{a}&=& \frac{1}{n}\sum_{j=1}^n \left( y_j - f(t_j ; \hat{\phi})\right)^2 \\ \\ -2 LL(\hat{\psi} ; y_1,y_2,\ldots,y_j) &=& \sum_{j=1}^n \log(\hat{a}^2) + n +n\log(2\pi) \end{align}\)

For a linear model:

- \( y_{j} = F_j \, \phi + a \, \bar{\varepsilon_j} \quad ; \quad 1\leq j \leq n \)

Let

- \( F = \left(\begin{array}{c} F_1 \\ F_2 \\ \vdots \\F_n \\ \end{array} \right) \quad ; \quad y = \left(\begin{array}{c} y_1 \\ y_2 \\ \vdots \\y_n \\ \end{array} \right) \)

Then

- \(\begin{align} \hat{\phi} &=& (F^\prime F)^{-1} F^\prime y \\ \hat{a}&=& \frac{1}{n}\sum_{j=1}^n \left( y_j - F \hat{\phi})\right)^2 \end{align}\)

Computing the Fisher Information Matrix

Let $\psis$ be the true unknown value of $\psi$, and let $\hat{\psi}$ be the maximum likelihood estimate of $\psi$. If the observed likelihood function is sufficiently smooth, asymptotic theory for maximum-likelihood estimation holds and

- \( I_n(\psis)^{\frac{1}{2}}(\hat{\psi}-\psi{^\star}) \limite{n\to \infty}{} {\mathcal N}(0,{\rm Id}) \)

where

- \( I_n(\psi{^\star})= - \DDt LL(\psis;y_1,y_2,\ldots,y_n) \)

is the observed Fisher information matrix. Thus, an estimate of the covariance of $\hpsi$ is the inverse of the observed Fisher information matrix:

- \( C(\hpsi) = \left(- \DDt LL(\hpsi ; y_1,y_2,\ldots,y_n) \right)^{-1} \)

Deriving confidence intervals for the parameters

Let $\psi_k$ be the $k$th component of $\psi$, $k=1,2,\ldots,d$. $\psi_k$ is estimated by $\hpsi_k$, the $k$th component of $\hpsi$.

$\hpsi_k$ is a random variable that converges to $\psi_k^{\star}$ when $n \to \infty$.

An estimator of its variance is the $k$th element of the diagonal of $C(\hpsi)$:

- \( \widehat{\rm Var}(\hpsi_k) = C_{kk}(\hpsi) \)

We can then derive an estimator of its standard error

- \( \widehat{\rm s.e}(\hpsi_k) = \sqrt{C_{kk}(\hpsi)} \)

and a confidence interval for $\psi_k^\star$ constructed at a confidence level $\alpha$:

- \( {\rm CI}(\psi_k) = [\hpsi_k + \widehat{\rm s.e}(\hpsi_k)q((1-\alpha)/2) , \hpsi_k + \widehat{\rm s.e}(\hpsi_k)q((1+\alpha)/2)] \)

where $q(\alpha)$ is the quantile of order $\alpha$ of a ${\cal N}(0,1)$ distribution.

Remark:

- The normal distribution for $\hpsi/\widehat{\rm s.e}(\hpsi_k)$ is a "good" approximation only when the number of observations $n$ is large.

- Better approximation should be used for small $n$.

- In the model $y_j = f(t_j ; \phi) + a\teps_j$, the distribution of $\hat{a}^2$ can be approximated by a chi-square distribution with $(n-d_\phi)$ degrees of freedom, :where $d_\phi$ is the size of $\phi$.

- The quantiles of the normal distribution can then be replaced by the quantiles of a $t$-distribution with $(n-d_\phi)$ df.

Deriving confidence intervals for the predictions:

The regression model (or structural model) can be predicted for any $t$ by

- \( \hat{f}(t,\phi) = f(t ; \hphi) \)

It is then possible for any $t$ to derive a confidence interval for $f(t,\phi)$ using the estimated variance of $\hphi$.

Indeed, as a first approximation, we have:

- \( f(t ; \hphi) \simeq f(t ; \phi) + J_f(\phi) (\hphi - \phi) \)

where $J_f(t,\phi)$ is the Jacobian matrix of $f$, i.e. the matrix of all first-order partial derivatives of $f(t,\phi)$ with respect to the components of $\phi$ (the $k$th row of $J_f(t,\phi)$ is )

- \( f(t ; \hphi) \simeq f(t ; \phis) + \nabla f (t,\phis) (\hphi - \phis) \)

where $\nabla f(t,\phis)$ is the gradient of $f$, {\it i.e.} the vector of all first-order partial derivatives of $f(t,\phi)$ with respect to the components of $\phi$, obtained with $\phi=\phis$.

$\nabla f(t,\phis)$ can be estimated by $\nabla f(t,\hphi)$. We can then estimate the variance of $f(t ; \hphi)$ by

- \( \widehat{\rm Var}\left(f(t ; \hphi)\right) \simeq \nabla f (t,\hphi)\widehat{\rm Var}(\hphi) \left(\nabla f (t,\hphi) \right)^\prime \)

Estimating confidence intervals by Monte Carlo:

Estimating any distribution using Monte Carlo method does not require any approximation of the model.

We can easily estimate accurately the distribution of $\hpsi$ by simulating a large number $M$ of independent vectors of observations $(y^{(m)},1\leq m \leq M)$ using the estimated distribution of the observed vector $y=(y_j)$:

- \( y^{(m)}_j = f(t_j ;\hpsi) + g(t_j ;\hpsi)\teps^{(m)}_j \)

where $\teps^{(m)}_j \sim_{i.i.d.} {\cal N}(0,1)$.

We can then compute the MLE of $\psi$ for each of these replicates to derive an empirical estimation of the distribution of $\hpsi$. In particular, we can estimate any quantile of the distribution of $\hpsi_k$ using the empirical quantiles of $(\hpsi^{(m)}_k ,1\leq m \leq M)$.

Any confidence interval for $\psi_k$ (resp. $f(t,\psi_k)$) can then be approximated by a prediction interval for $\hpsi_k$ (resp. $f(t,\hpsi_k)$) .

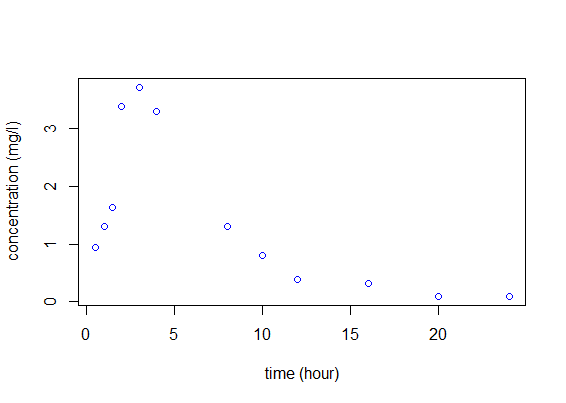

A PK example

| A dose of $D=50$ mg of a drug is administrated orally to a patient at time 0, Concentrations of the drug are measured at times (0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 8.0, 10.0, 12.0, 16.0, 20.0, 24.0). | ||||

| ||||

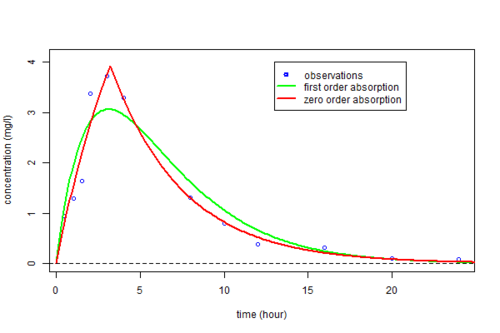

Two structural models:

- 1. One compartment model, first order absorption and linear elimination

- \(\begin{align} \phi_1 &=& (k_a, V, k_e) \\ f_1(t ; \phi_1) &=& \frac{D\, k_a}{V(k_a-k_e)} \left( e^{-k_e \, t} - e^{-k_a \, t}\right) \end{align}\)

- 2. One compartment model, zero order absorption and linear elimination

- \(\begin{align} \phi_2 &=& (T_{k0}, V, k_e) \\ f_2(t ; \phi_2) &=& \left\{ \begin{array}{ll} \frac{D}{V \,T_{k0} \, k_e} \left( 1- e^{-k_e \, t} \right) & {\rm if } t\leq T_{k0} \\[0.3cm] \frac{D}{V \,T_{k0} \, k_e} \left( 1- e^{-k_e \, T_{k0}} \right)e^{-k_e \, (t- T_{k0})} & {\rm otherwise} \end{array} \right. \end{align}\)

predc1=function(t,x){

} |

predc2=function(t,x){

} |

We define then models ${\cal M}_1$ and ${\cal M}_2$ by assuming constant error models:

- \(\begin{align} {\cal M}_1 : \quad y_j & = & f_1(t_j ; \phi_1) + a_1\teps_j \\ {\cal M}_2 : \quad y_j & = & f_2(t_j ; \phi_2) + a_2\teps_j \end{align}\)

We can fit these two models to our data by computing $\hpsi_1=(\hphi_1,\hat{a}_1)$ and $\hpsi_2=(\hphi_2,\hat{a}_2)$, the MLEs of $\psi$ under models ${\cal M}_1$ and ${\cal M}_2$.

| fmin1=function(x,y,t) {

} | |

| fmin2=function(x,y,t) {

} | |

| #-------- MLE --------# | |

pk.nlm1=nlm(fmin1,c(0.3,6,0.2,1),y,t,hessian="true") |

> cat(" psi1 =",psi1,"\n\n") psi1 = 0.3240916 6.001204 0.3239337 0.4366948 > cat(" psi2 =",psi2,"\n\n") psi2 = 3.203111 8.999746 0.229977 0.2555242

|

The estimated parameters $\hphi_1$ and $\hphi_2$ can then be used for computing predicted concentrations $\hat{f}_1(t)$ and $\hat{f}_2(t)$ under both models and for any time $t$. We clearly see here that a much better fit is obtained with model ${\cal M}_2$, i.e. assuming a zero order absorption process.

| tc=seq(from=0,to=25,by=0.1) fc1=predc1(tc,phi1) fc2=predc2(tc,phi2) plot(t,y,ylim=c(0,4.1),xlab="time (hour)",...

|

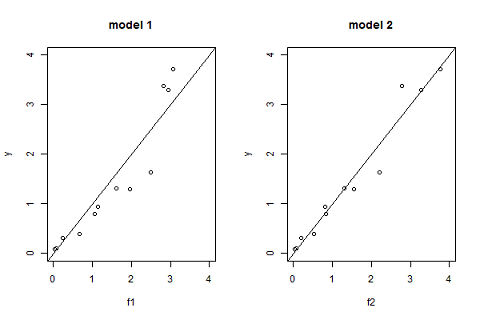

Another useful goodness of fit plot is obtained by displaying the observations $(y_j)$ versus the predictions $\hat{y}_j=f(t_j ; \hpsi)$ given by the model.

| pk.nlm1=nlm(fmin1,c(0.3,6,0.2,1),y,t,hessian="true") f2=predc2(t,phi2) par(mfrow= c(1,2)) plot(f1,y,xlim=c(0,4),ylim=c(0,4),main="model 1") abline(a=0,b=1,lty=1) plot(f2,y,xlim=c(0,4),ylim=c(0,4),main="model 2") abline(a=0,b=1,lty=1) |

Bayesian Information Criteria confirm the zero order absorption process should be selected.

|

> cat(" bic1 =",bic1,"\n\n") bic1 = 24.10972 > cat(" bic2 =",bic2,"\n\n") bic2 = 11.24769

|

We have only considered for the moment constant error models. Nevertheless, the graphic "observations vs predictions" seems to indicate that the amplitude of the residual errors increase with the prediction. We will then consider four different residual error models associated with the same structural model $f_2$.

| ${\cal M}_2$, | constant error model: | $y_j=f_2(t_j;\phi_2)+a_2\teps_j$ |

| ${\cal M}_3$, | proportional error model: | $y_j=f_2(t_j;\phi_3)+b_3f_2(t_j;\phi_3)\teps_j$ |

| ${\cal M}_4$, | combined error model: | $y_j=f_2(t_j;\phi_4)+(a_4+b_4f_2(t_j;\phi_4))\teps_j$ |

| ${\cal M}_5$, | exponential error model: | $\log(y_j)=\log(f_2(t_j;\phi_5)) + a_5\teps_j$ |

| fmin3=function(x,y,t) {

} |

| fmin4=function(x,y,t) {

} |

| fmin5=function(x,y,t) {

} |

We can now compute $\hpsi_3=(\hphi_3,\hat{b}_3)$, $\hpsi_4=(\hphi_4,\hat{a}_4,,\hat{b}_4)$ and $\hpsi_5=(\hphi_5,\hat{a}_5)$, the MLEs of $\psi$ under models ${\cal M}_3$, ${\cal M}_4$ and ${\cal M}_5$.

- f=predc2(t,x)

- g=abs(x[4])+abs(x[5])*f

e=sum( ((y-f)/g)^2 + log(g^2))

| fmin3=function(x,y,t) {

} | ||

| fmin5=function(x,y,t) {

} | ||

| #-------- MLE --------# | ||

pk.nlm3=nlm(fmin3,c(phi2,0.1),y,t,hessian="true") pk.nlm5=nlm(fmin5, c(phi2,0.1),y,t,hessian="true") bic5=deviance5 + log(n)*length(psi5) |

> cat(" bic3 =",bic3,"\n\n") bic3 = 3.443607 > cat(" bic4 =",bic4,"\n\n") bic4 = 3.475841 > cat(" bic5 =",bic5,"\n\n")

bic5 = 4.108521

| |

There is no obvious difference between these three error models. We will use the combined error model ${\cal M}_4$ in the following.

A 90% confidence interval for $\psi_4$ can derived from the Hessian matrix of the objective function.

| alpha=0.9 df=n-length(phi4) |